Education Course

Pedestrian measurement using subtraction stereo

Kazunori Umeda

Professor of Intelligent Mechanics and Mechanical System, Intelligent Informatics, and Measurement Engineering, Faculty of Science and Engineering, Chuo University

Introduction

Pedestrian measurement technology is required for marketing and for surveillance systems which ensure a safe and secure society. This essay introduces research regarding pedestrian measurement using subtraction stereo, a theme addressed by my laboratory (Department of Precision Mechanics, Intelligent Sensing System Laboratory).

What is subtraction stereo?

Human beings perceive the world in three dimensions through stereo vision using two eyes (we also use cues such as focus, movement and shading). This principle is also used in 3D televisions, which have become popular recently. Similarly, mechanical eyes used in robots can perceive three dimensions (that is, measure distance) by using two or more cameras. This is known as stereo vision, a topic which is heavily studied in fields such as photogrammetry, computer vision and robot vision. Commercial devices which connect to a computer are now available, and there are even examples of stereo vision being installed in automobiles. The misalignment between the images captured by two cameras (referred to as disparity) increases as an object gets closer; more precisely, disparity is inversely proportional to distance. Using this relationship to measure distance is the fundamental principle of stereo vision. Stereo vision can be explained easily in this manner, and people view the world in stereo without effort. However, it is not so simple to replicate stereo vision in machines. In practice, it is quite difficult to determine which point in the image from one camera corresponds to a given point in the image from the other camera. This difficulty is referred to as the "correspondence problem."
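The inverse relationship between disparity and distance can be illustrated with a short sketch. It assumes an idealized, rectified pair of pinhole cameras, for which distance is commonly written as Z = f * B / d; the function name and the camera values (800 px focal length, 0.12 m baseline) are hypothetical and are not taken from the laboratory's equipment.

```python
def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Distance Z (meters) for a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical camera parameters, chosen only for illustration.
    focal_length_px = 800.0
    baseline_m = 0.12
    for disparity in (8.0, 16.0, 32.0):
        z = depth_from_disparity(disparity, focal_length_px, baseline_m)
        print(f"disparity {disparity:5.1f} px -> distance {z:.2f} m")
```

Doubling the disparity halves the computed distance, which is why nearby pedestrians produce the largest disparities.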

Figure 1: Flow of processing in subtraction stereo

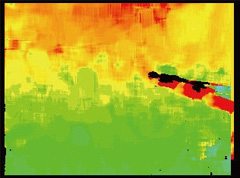

Continuing onward from my somewhat lengthy introduction, I would like to discuss subtraction stereo, a method of stereo vision studied at my laboratory. As shown in Figure 1, this method starts by taking images from two cameras and removing the background from both images in order to acquire the foreground region. Then, correspondence between the two camera images is sought for each point only within the remaining foreground region (a step known as stereo matching). The simplest way of acquiring the foreground image is to register a background image in advance and then subtract it from the image currently being viewed. Because background subtraction is followed by stereo matching, the method is known as subtraction stereo. Limiting processing to the foreground region restricts the search range for corresponding points. This simplifies the correspondence problem and reduces the amount of calculation, both of which are characteristics of the subtraction stereo method. Furthermore, since the region in which distance is acquired is limited, subsequent processing is also simplified. Figure 2 shows an example of a disparity image obtained using subtraction stereo. This image was obtained by implementing a subtraction stereo algorithm with a commercial stereo camera (Bumblebee2, manufactured by Point Grey Research). Disparity is represented by color: as the color changes from red to blue, disparity becomes greater and distance becomes smaller. It can clearly be seen that the disparity image obtained using subtraction stereo is limited to the region of the pedestrians. Incidentally, in normal stereo vision, measurement mistakes are caused by incorrect correspondence in areas such as the white lines on the right. Such mistakes are almost completely eliminated when using the subtraction stereo method.
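The flow described above (background subtraction on both images, then matching only within the foreground) can be sketched roughly as follows. This is a minimal illustration and not the laboratory's implementation: it assumes rectified grayscale images, a fixed difference threshold, and a simple sum-of-absolute-differences block matcher. The function names and all parameter values are hypothetical.

```python
import numpy as np


def foreground_mask(image, background, threshold=20):
    """True where the image differs from the registered background by more than threshold."""
    diff = np.abs(image.astype(np.int32) - background.astype(np.int32))
    return diff > threshold


def subtraction_stereo(left, right, bg_left, bg_right,
                       max_disp=16, half=2, threshold=20):
    """Disparity (pixels) at foreground pixels of the left image; 0 elsewhere."""
    fg_l = foreground_mask(left, bg_left, threshold)
    fg_r = foreground_mask(right, bg_right, threshold)
    # Remove the background so that only the foreground takes part in matching.
    sub_l = np.where(fg_l, left, 0).astype(np.int32)
    sub_r = np.where(fg_r, right, 0).astype(np.int32)
    h, w = left.shape
    disparity = np.zeros((h, w), dtype=np.int32)
    for y in range(half, h - half):
        for x in range(half, w - half):
            if not fg_l[y, x]:
                continue  # background pixel: no search at all
            patch = sub_l[y - half:y + half + 1, x - half:x + half + 1]
            best_cost, best_d = None, 0
            for d in range(max_disp + 1):
                xr = x - d  # the same point appears further left in the right image
                if xr - half < 0:
                    break
                if not fg_r[y, xr]:
                    continue  # candidates are limited to the right foreground
                cand = sub_r[y - half:y + half + 1, xr - half:xr + half + 1]
                cost = np.abs(patch - cand).sum()  # sum of absolute differences
                if best_cost is None or cost < best_cost:
                    best_cost, best_d = cost, d
            disparity[y, x] = best_d
    return disparity
```

Both the outer loop and the candidate search skip background pixels, which is where the reduced search range and reduced calculation come from in this sketch.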

Figure 2: Disparity image captured using subtraction stereo

(a) Subject scene

(b) Subtraction stereo

(c) Normal stereo vision

Tracking of multiple individuals

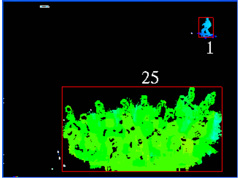

Each point in the disparity image in Figure 2 holds a disparity value. From this disparity, it is possible to calculate the distance from the camera and the three-dimensional coordinates as seen from the camera. (Images of distance or three-dimensional coordinate values are known as "range images.") Therefore, it is easy to acquire the position, height and width of each individual region extracted in image (b) of Figure 2. Furthermore, individuals can be tracked by repeating this extraction over a temporal sequence of images. My research laboratory performs this kind of individual tracking using the Extended Kalman Filter. By creating a model (state equation) in which individuals move in uniform linear motion and then applying the Extended Kalman Filter, it is possible to track the three-dimensional position of individuals while reducing the effect of measurement error in individual positions. During such tracking, there is no problem even if multiple individuals cross paths or are momentarily hidden while walking behind an object. Figure 3 shows an example of tracking performed for multiple individuals. Image (b) of Figure 3 is a bird's-eye view of tracking results for the scene shown in image (a) of Figure 3. It is clear that the motion of multiple individuals has been appropriately tracked.
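To make the idea of a uniform linear motion model concrete, here is a minimal sketch of a constant-velocity tracker for one person on the ground plane. It is a simplification rather than the laboratory's filter: it assumes the measurement is the position itself, in which case the Extended Kalman Filter reduces to the ordinary linear Kalman filter shown here. The function names, the noise variances and the initial covariance are hypothetical. A measurement of `None` stands for a frame in which the person is hidden, so only the prediction step is run.

```python
import numpy as np


def constant_velocity_model(dt, process_var, meas_var):
    """Matrices for the state [x, y, vx, vy] with position-only measurements."""
    F = np.eye(4)
    F[0, 2] = F[1, 3] = dt       # position advances by velocity * dt
    H = np.zeros((2, 4))
    H[0, 0] = H[1, 1] = 1.0      # only x and y are observed
    Q = process_var * np.eye(4)  # allows small departures from uniform motion
    R = meas_var * np.eye(2)     # measurement noise of the stereo position
    return F, H, Q, R


def track(measurements, dt=0.1, process_var=1e-3, meas_var=0.05):
    """Filter a sequence of (x, y) positions; entries may be None when occluded.

    The first entry must be a real measurement. Returns an array of
    [x, y, vx, vy] estimates, one row per time step.
    """
    F, H, Q, R = constant_velocity_model(dt, process_var, meas_var)
    first = np.asarray(measurements[0], dtype=float)
    x = np.array([first[0], first[1], 0.0, 0.0])   # velocity unknown at start
    P = np.diag([meas_var, meas_var, 10.0, 10.0])  # large velocity uncertainty
    estimates = [x.copy()]
    for z in measurements[1:]:
        # Prediction with the uniform linear motion model.
        x = F @ x
        P = F @ P @ F.T + Q
        if z is not None:
            # Correction with the measured position.
            innovation = np.asarray(z, dtype=float) - H @ x
            S = H @ P @ H.T + R
            K = P @ H.T @ np.linalg.inv(S)
            x = x + K @ innovation
            P = (np.eye(4) - K @ H) @ P
        estimates.append(x.copy())
    return np.array(estimates)
```

Because the prediction step keeps running while measurements are missing, the estimated position continues along the last estimated velocity, which is one way a tracker can bridge a short occlusion.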

Figure 3: Tracking of multiple individuals

(a) Subject scene

(b) Tracking results (bird's-eye view)

In addition to walking alone, it is also common for several people to walk together in a group. In such instances, the group is tracked as a single unit. It is also possible to estimate the number of people in the group from the area of the group's region in the image and the distance information contained within it. An example is shown in Figure 4. The area within the image changes according to the angle from which the camera views the scene, so a correction is applied to compensate for such differences. The area also changes according to the degree of overlap among people within the group, and evaluating this degree of overlap (multiplicity) is a rather difficult task. Currently, we are constructing a multiplicity evaluation method that uses the distribution in three-dimensional space of feature points extracted from a color image of the group's region. A method known as KLT (Kanade-Lucas-Tomasi Feature Tracker) is used to extract the feature points from color images.
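As a rough illustration of how region area and distance might be combined, the sketch below converts a pixel area into a metric area under a pinhole camera assumption and divides by an assumed silhouette area per person. It does not model the viewing-angle correction or the overlap evaluation described above. The function name, the 0.5 m² area per person and the camera values are hypothetical.

```python
def estimate_group_size(region_area_px, distance_m, focal_length_px,
                        person_area_m2=0.5):
    """Approximate head count from a region's pixel area and its distance.

    Assumes a pinhole camera and a region roughly facing the camera, so one
    pixel at distance Z covers about (Z / f)^2 square meters.
    """
    metric_area_m2 = region_area_px * (distance_m / focal_length_px) ** 2
    return metric_area_m2 / person_area_m2


if __name__ == "__main__":
    # Hypothetical values: a 20000-pixel region seen at 8 m with f = 800 px.
    print(round(estimate_group_size(20000, 8.0, 800.0)))
```

The same pixel area corresponds to more people when the group is farther away, which is why the distance information is needed.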

Figure 4: Estimating the number of people in a group

(a) Example of subject scene

(b) Result of scene (a)

(c) Result of a different scene

Measuring pedestrian traffic in crowded areas

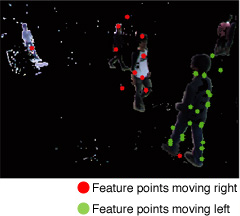

The methods described above track multiple individuals or groups on the assumption that each individual or group is reasonably well separated within the image. As a result, it is difficult to apply them to scenes such as the Shibuya scramble intersection, where people walk through crowded areas. We therefore constructed a method for performing pedestrian measurement in such crowded areas. This method does not attempt to separate each individual or group. Instead, it estimates the number of people who are walking in each direction. The method is illustrated in Figure 5. It uses a foreground image extracted by background subtraction. First, the KLT method introduced above is used to extract feature points from a color image (Figure 5 (b)). KLT can also track feature points, and from the tracking results it is determined whether each feature point is moving to the right or to the left. Next, a Voronoi diagram is constructed from those feature points (Figure 5 (c)), so that each region of the Voronoi diagram contains a single feature point. The part of the foreground region that falls within each Voronoi region is assigned the direction of movement of that region's feature point (Figure 5 (d)). The number of people moving to the left and to the right is obtained by calculating the foreground area for each direction and dividing it by the area per individual, while taking distance information into account.
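The counting step can be sketched as follows, taking the feature points and their horizontal velocities as given inputs (in the method above they come from KLT, which is not reproduced here). Assigning each foreground pixel to its nearest feature point is equivalent to asking which Voronoi region the pixel lies in. The function name, the per-pixel area model (a pinhole camera, with distance taken from a range image) and the 0.5 m² area per person are assumptions made for this illustration.

```python
import numpy as np


def count_by_direction(fg_mask, depth_m, points_xy, velocities_x,
                       focal_length_px, person_area_m2=0.5):
    """Estimate how many people move right and left in a crowded foreground.

    fg_mask      : (H, W) bool array, True for foreground pixels
    depth_m      : (H, W) float array, distance of each pixel in meters
    points_xy    : (K, 2) float array of feature points as (x, y), K >= 1
    velocities_x : (K,) float array, positive when a point moves right
    """
    ys, xs = np.nonzero(fg_mask)
    pixels = np.stack([xs, ys], axis=1).astype(float)
    # Nearest feature point per pixel, i.e. the Voronoi region containing it.
    sq_dist = ((pixels[:, None, :] - points_xy[None, :, :]) ** 2).sum(axis=2)
    nearest = sq_dist.argmin(axis=1)
    moving_right = velocities_x[nearest] > 0
    # Metric area covered by each pixel under a pinhole model: (Z / f)^2.
    pixel_area_m2 = (depth_m[ys, xs] / focal_length_px) ** 2
    right = pixel_area_m2[moving_right].sum() / person_area_m2
    left = pixel_area_m2[~moving_right].sum() / person_area_m2
    return right, left
```

In this sketch a feature point with zero horizontal velocity is counted as moving left; a real system would more likely handle stationary points separately.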

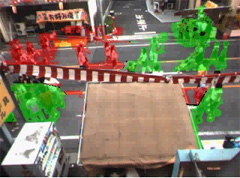

Figure 6 shows the results of an experiment using this method to measure pedestrian traffic at a festival in Tatebayashi City, Gunma Prefecture. The foreground region is acquired for scenes like Figure 6 (a). Example results of obtaining the movement direction for each region are shown in Figure 6 (b). Based on such results, Figure 6 (c) and (d) show the number of people moving right and left over a temporal sequence. Although the differences from the actual number of people (counted visually by a student while looking at the images) are somewhat large, the results show that the approximate number of people can be calculated.

One last point which I forgot to mention is that all methods discussed in this essay operate in real time.

Figure 5: Measuring pedestrian traffic in crowded areas

(a) Subject scene

(b) Extraction of feature points (red: right direction, green: left direction)

(c) Voronoi diagram

(d) Direction of movement in each region (red: right direction, green: left direction)

Figure 6: An example of measuring pedestrian traffic in crowded areas

(a) Experiment environment

(b) Direction of movement in each region (red: right direction, green: left direction)

(c) Number of people walking right

(d) Number of people walking left

Summary

This completes the introduction of my laboratory's research on pedestrian measurement methods which use subtraction stereo. I have explained the concept of subtraction stereo, and I have described a method for tracking multiple individuals or groups in environments which are not so crowded, as well as a method for measuring pedestrian flow in crowded environments. There are also many other aspects of subtraction stereo which I was not able to introduce in this essay. For example, the stability of subtraction stereo can be increased by combining a method for updating background images in real time over a temporal sequence with a method for separating foreground regions from shadows. If you are interested in such methods, please visit my laboratory's homepage at http://www.mech.chuo-u.ac.jp/umedalab/. Also of interest is the variety of research other than subtraction stereo which is conducted by the laboratory. For example, we are working to construct an intelligent room in which home appliances can be operated using gestures, as well as to construct range image sensors using multi-slit light for humanoid robots.

The research discussed in this essay was implemented in the project entitled "OSOITE -Overlay-network Search Oriented for Information about Town Events-" (research representative: Professor Yoshito Tobe of Tokyo Denki University). This project is conducted as part of the Core Research for Evolutional Science and Technology (CREST) program at the Japan Science and Technology Agency (JST). I would also like to offer my sincere thanks for cooperation received from Tatebayashi City and all related people during the experiment.

Kazunori Umeda

Professor of Intelligent Mechanics & Systems, Human & Computer Intelligence, Metrology, Faculty of Science and Engineering, Chuo University

In 1989, graduated from the Department of Precision Machinery Engineering at the University of Tokyo Faculty of Engineering. In 1994, completed the Doctoral Program at the same department. The same year, assumed the position of lecturer in the Department of Precision Mechanics at the Chuo University Faculty of Science and Engineering. Assumed the position of associate professor at the same department in 1998. Has served as professor since 2006. From 2003 to 2004, served as a Visiting Worker at the National Research Council of Canada. From 2007 to 2009, served as a senior scientific research specialist for the Ministry of Education, Culture, Sports, Science and Technology. Involved in research on robot vision and image processing. Received the Nagao Prize at the Meeting on Image Recognition & Understanding (MIRU) 2004. Holds a PhD in engineering. Member of academic societies such as the Robotics Society of Japan, the Japan Society of Mechanical Engineers, the Japan Society for Precision Engineering, the Institute of Electronics, Information and Communication Engineers, and the Institute of Electrical and Electronics Engineers (IEEE).